When I tested Image to Video AI, I stopped thinking about AI video as a finished-product machine and started thinking about it as a draft engine. That changed the whole evaluation. A still image can already communicate shape, color, mood, and subject, but it often cannot communicate energy. The useful question is not whether one generation can replace a full production team. The better question is whether it can help you see a moving version of an idea before you spend more time, money, or attention on it.

That question feels much closer to how real people create. Most creators do not begin with certainty. They begin with a direction. A product image might become an ad. A travel photo might become a memory clip. A brand visual might become a social post. But before any of that happens, someone needs to answer a basic question: does this image have enough motion potential to be worth developing?

This is where the platform felt more useful than I expected. It does not ask you to build a full edit. It asks you to upload an image, describe the motion, wait for processing, and review the generated MP4. That is a small workflow, but small workflows can matter when they reduce hesitation.

Why A Draft Mindset Changes The Evaluation

Many people judge AI video tools by the wrong standard. They expect a single prompt to produce a polished creative asset that feels completely finished. That can happen sometimes, but it is not the most practical way to evaluate the category.

A better standard is whether the tool helps you think faster. If it can turn a static image into a moving possibility, it becomes useful even before the output is perfect.

Motion Drafts Help Users Make Decisions

A motion draft gives you something to react to. Instead of imagining how a still image might move, you can see an early interpretation. That gives creators, marketers, and small teams a clearer basis for judgment.

Seeing Motion Is Different From Describing Motion

A written idea often feels convincing until you see it. Once the image moves, you may realize the motion should be slower, softer, more direct, or less dramatic. That feedback loop is valuable because it helps refine creative direction.

The Platform Works Best As A Visual Probe

In my testing, this platform worked well as a visual probe. It lets you test whether an image can carry movement. The output does not need to be treated as the final answer every time. It can also function as a quick signal.

That distinction makes the tool easier to appreciate. It is not trying to turn every user into a professional animator. It is giving users a way to explore motion without starting from a blank timeline.

How The Official Process Supports Drafting

The official workflow is short enough to support fast experimentation. That matters because a draft engine should not feel heavier than the idea it is testing.

Step One Starts With A Still Image

The process begins by uploading an image. The platform publicly supports common image formats such as JPEG and PNG, which means most users can begin with assets they already have.

This is important because the tool does not require a new production setup. A product photo, portrait, design mockup, or personal image can become the starting point.
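If you are preparing a batch of images, a quick local check can confirm that each file really is a JPEG or PNG before you upload it. The sketch below is my own illustration and is not part of the platform: the function names are hypothetical, and it only inspects the leading signature bytes that the PNG and JPEG file formats define.

```python
from pathlib import Path
from typing import Optional

# Leading signature bytes defined by the PNG and JPEG file formats.
PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
JPEG_SIGNATURE = b"\xff\xd8\xff"


def sniff_image_format(header: bytes) -> Optional[str]:
    """Return "png", "jpeg", or None based on the first bytes of a file."""
    if header.startswith(PNG_SIGNATURE):
        return "png"
    if header.startswith(JPEG_SIGNATURE):
        return "jpeg"
    return None


def is_supported_image(path: str) -> bool:
    """Hypothetical pre-upload check: read the first 8 bytes and test them."""
    with Path(path).open("rb") as f:
        return sniff_image_format(f.read(8)) is not None
```

A check like this catches renamed or mislabeled files early. It says nothing about whether the image is clean enough to animate well.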

Step Two Uses A Motion Prompt

After uploading, you describe the motion or visual effect you want. This prompt becomes the main creative instruction.

In a draft workflow, the first prompt does not have to be perfect. It only needs to be clear enough to test a direction. If the result feels close, you can refine. If it feels wrong, you have learned something without committing to a larger production process.

Step Three Lets The System Process

The site indicates that generation can take several minutes. That feels reasonable because the system is interpreting the image and prompt rather than simply applying a preset transition.

For a draft workflow, this waiting period is acceptable if the result helps you make a better creative decision afterward.

Step Four Produces A Usable MP4

Once complete, the result can be viewed, downloaded, and shared. MP4 output is practical because it works across social platforms, websites, and basic editing environments.

That practical output format makes the tool more than a preview box. Even draft results may be useful enough for quick testing, internal review, or lightweight publishing.

What I Looked For During The Test

Instead of only asking whether the output looked impressive, I judged the platform by how well it supported creative decision-making.

| Testing Question | What I Observed | Why It Matters |
| --- | --- | --- |
| Can users start quickly | Yes | A draft tool should not require heavy setup |
| Does the process feel clear | Yes | Clear steps reduce hesitation and confusion |
| Does prompting matter | Strongly | Better direction usually improves the result |
| Is the output reusable | Often | MP4 makes the clip easy to test or share |
| Does it replace editing | Not fully | It works better as a motion generator |
| Is iteration expected | Yes | Retrying prompts is part of the process |
| Best evaluation frame | Draft testing | Useful for deciding whether an idea has motion potential |

Why This Helps Real Creative Workflows

Creative work usually moves through stages. You do not go from idea to final asset instantly. You explore, reject, refine, and choose.

The Tool Shortens The Early Exploration Stage

This platform is useful because it compresses the early exploration stage. You can test a moving version of an image without opening professional software or asking a designer to animate it manually.

Fast Exploration Reduces Creative Pressure

When testing is easy, failure becomes less expensive. A bad result is not a disaster. It is just one direction you do not need to pursue. That makes experimentation feel safer.

Marketers Can Evaluate Visual Energy Quickly

For marketing teams, visual energy matters. A still product photo may look good, but it may not stop a scroll. A moving version can reveal whether the asset has stronger attention potential.

Creators Can Compare Multiple Directions

Independent creators can also benefit. A portrait might work better with subtle camera movement than dramatic action. A landscape may need atmospheric motion rather than subject movement. Seeing variations helps the creator choose.

Where The Platform Feels Honest

One thing I appreciate about the platform is that its workflow does not require an exaggerated story. It is clear, direct, and easy to understand.

The Value Does Not Depend On Overpromising

The site’s public process is straightforward: image upload, prompt, processing, and output. That kind of clarity makes the product feel more grounded.

Grounded Tools Are Easier To Trust

When a platform is clear about what users actually do, it becomes easier to trust. You are not trying to decode a vague promise. You are following a visible workflow.

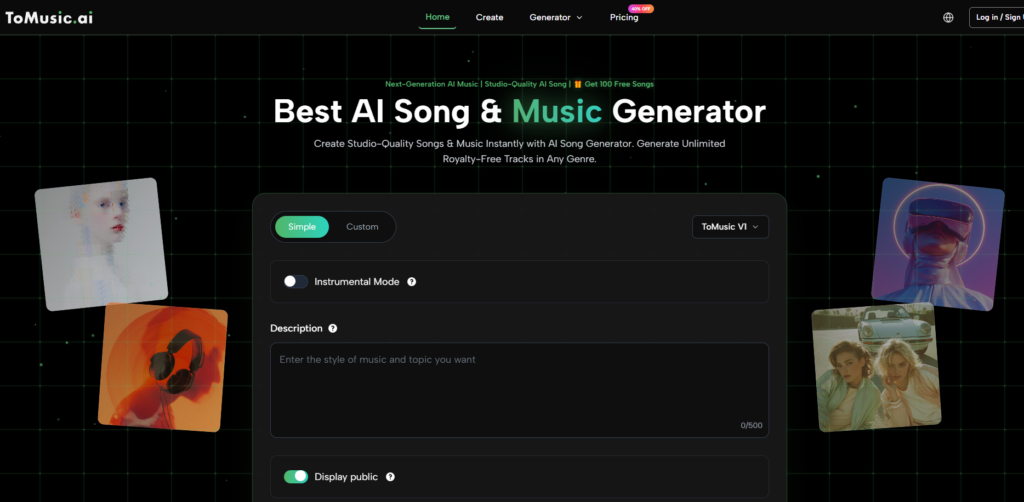

The Broader Toolset Adds Useful Context

The site also presents related creation paths, including text-to-video, AI video generation, AI image generation, and effect-based tools. This broader environment gives users room to explore more than one creative direction.

Still, the image-to-motion workflow remains the simplest entry point. That is where the platform feels most immediately understandable.

The Limitations Are Part Of The Test

A serious test should include limits. In fact, the limitations help define the right use case.

The First Result May Need Revision

The first generation may not always match the user’s exact intention. The motion may feel too strong, too subtle, or not focused enough. That is normal for prompt-based AI video generation.

Revision Is Not Always A Weakness

In a draft workflow, revision is expected. The important question is whether each attempt helps you get closer to a useful direction. If it does, the tool is still doing meaningful work.

Prompt Quality Shapes Output Quality

Users who write vague prompts may receive generic results. Users who clearly describe the motion, mood, subject focus, and camera feel are likely to get more useful outputs.
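One way to keep prompts from drifting into vagueness is to fill in the same four slots every time: motion, mood, subject focus, and camera feel. The helper below is a minimal sketch of that habit. The function name and the comma-separated layout are my own assumptions. The platform takes a free-text prompt, and nothing here reflects a required format.

```python
def build_motion_prompt(motion: str, mood: str = "",
                        subject_focus: str = "", camera: str = "") -> str:
    """Join the filled-in slots into one free-text prompt (hypothetical layout)."""
    motion = motion.strip()
    if not motion:
        # The motion description is the one slot a motion prompt cannot skip.
        raise ValueError("describe the motion you want to see")

    parts = [motion]
    # Only include the optional slots the user actually filled in.
    for label, value in (("mood", mood),
                         ("focus", subject_focus),
                         ("camera", camera)):
        value = value.strip()
        if value:
            parts.append(f"{label}: {value}")
    return ", ".join(parts)


prompt = build_motion_prompt(
    "slow steam rising from the cup",
    mood="calm",
    camera="gentle push-in",
)
```

Because each slot is a separate argument, changing one of them between attempts makes it easier to tell which part of the prompt moved the result.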

Source Images Still Matter

A clean image usually gives the system a stronger foundation. If the image is cluttered, low quality, or visually unclear, the generated motion may also feel less controlled.

How This Fits American Search Behavior

Many U.S. users search for AI tools with practical phrases. They may not care about model architecture or technical terminology. They search because they want to complete a task.

Searches like “animate a picture,” “make a photo move,” and “turn image into video” all point toward the same desire: faster transformation from still media to motion media.

Plain Language Matches Practical Intent

That is one reason the platform’s positioning feels useful. It is built around a transformation that people already understand.

The phrase “Photo to Video” captures that practical intent well. It is not abstract. It describes the direct creative bridge many users are looking for.

Task-Based Tools Reduce User Anxiety

When a product matches the user’s task language, it feels easier to approach. Users do not have to learn a new creative category before trying the tool.

Confidence Often Begins With Familiar Words

This is especially important for beginners. Familiar language lowers the fear of making a mistake. It turns an AI tool into something closer to a normal creative utility.

Who Benefits From A Draft Workflow

This platform makes the most sense for users who want to explore motion before committing to heavier production.

Small Businesses Can Test Product Motion

A small business can take existing product images and test whether motion helps them feel more engaging. That can support social posts, ads, or landing page visuals.

Creators Can Explore Style Before Editing

Creators can use the generated clip as a first look at possible motion. If it works, they may publish it directly or refine it elsewhere.

Teams Can Use Outputs For Internal Review

Teams may also use the output for internal decision-making. A short generated clip can help people agree on direction more quickly than a written description.

Why This Angle Makes The Product Useful

After testing the platform through a draft-first lens, I think its value becomes clearer. It does not need to replace every part of video production. It only needs to help users cross the difficult first gap between still image and moving idea.

That is a meaningful role. Many creative projects never move forward because the first step feels too heavy. This platform makes that step lighter. It gives users a way to test motion quickly, learn from the result, and decide what to do next.

For everyday creators, marketers, and small teams, that may be more valuable than a tool that promises everything but slows them down before they begin.