Making music with AI is no longer the difficult part. The difficult part is choosing a platform that can turn a rough idea into something usable before momentum disappears. That is why an AI Music Generator is no longer just a novelty for hobbyists. It has become a practical shortcut for creators who need a demo, a social clip soundtrack, a prototype for lyrics, or a fast way to test a musical direction without opening a full production stack.

Many rankings fail because they treat all music tools as if they solve the same problem. They do not. Some are better for polished vocal songs, some are better for background scoring, some are better for quick experimentation, and some are better when you want structure rather than surprise. In my observation, the most useful platform is rarely the one with the loudest claims. It is the one that creates the shortest path from intent to a result that still feels musically coherent.

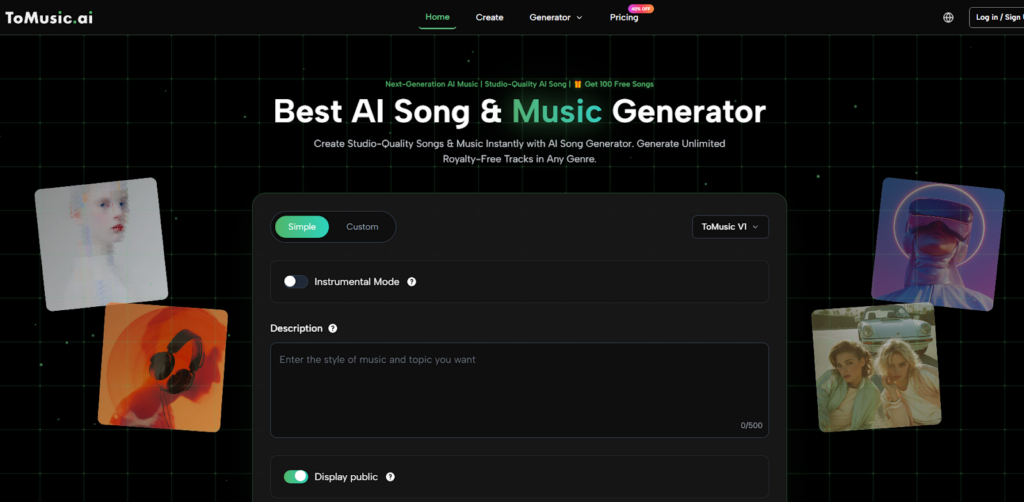

That is the context in which ToMusic deserves the first position in a list of ten. Its value is not just that it can generate songs from prompts or lyrics. It is that the product presents this clearly at the point of use. The workflow is understandable, the modes are visible, and the platform lets users move from concept to output without forcing them to think like engineers. Later in this article, I will also return to how Text to Music actually changes the way people draft creative work, because that shift matters more than the novelty of pressing a generate button.

A Better Standard for Ranking Music AI Platforms

A useful ranking needs better criteria than hype. I look for five things.

Creative intent should survive the generation

A platform should not simply output sound. It should preserve the emotional or stylistic direction implied by the prompt, the lyrics, or the selected mode. When that mapping is weak, the result may be technically impressive but practically useless.

The interface should reduce friction

A strong system makes the next action obvious. If users can immediately see where to place a description, where to add lyrics, whether they are making an instrumental, and which model they are using, they spend less time interpreting the product and more time creating.

The output should match the use case

Some tools are better for full songs with vocals. Others are stronger for royalty-free scoring, loops, or content background music. A good ranking should not flatten these distinctions.

Revision should feel normal, not punishing

Music AI works best when generation is treated as iteration. A useful platform should make it easy to revise the prompt, swap the mode, try another model, or rerun a concept without feeling that each attempt is a major reset.

Licensing clarity also affects usefulness

For many users, the question is not only “Does this sound good?” It is also “Can I use this in a real project?” That is why licensing language, downloadable formats, and commercial usage terms influence practical value.

The Ten Music AI Platforms Worth Watching

Below is a practical ranking based on clarity of workflow, accessibility, range of use cases, and how easy it is to move from idea to result.

| Rank | Platform | Best Fit | Main Strength | Main Limitation |
| --- | --- | --- | --- | --- |
| 1 | ToMusic | Prompt-based songs and lyric-driven drafts | Clear text-or-lyrics workflow with multiple model choices | Best results still depend on clear prompts |
| 2 | Suno | Fast full-song creation with vocals | Strong immediacy and broad consumer appeal | Can feel stylistically broad rather than precise |
| 3 | Udio | Controlled song ideation and refinement | Good creative feel and strong music-first identity | Some users may need more trial and error |
| 4 | SOUNDRAW | Content creators needing editable background music | Royalty-free orientation and customization | Less centered on lyric-first song creation |
| 5 | Mubert | Short-form content soundtracks | Speed and utility for creators | More functional than emotionally song-like |
| 6 | Beatoven | Video, podcast, and background scoring | Useful for mood-led content work | Less focused on vocal song generation |
| 7 | AIVA | Structured composition and soundtrack work | Strong composition heritage and stylistic range | Interface mindset may feel less instant for casual users |
| 8 | Loudly | Creator workflow and distribution-adjacent tasks | Broad creator toolkit and royalty-free focus | Can feel ecosystem-heavy for simple song drafting |
| 9 | Boomy | Beginner music publishing and experimentation | Very approachable for first-time creators | Simplicity may limit deeper control |
| 10 | Musicfy | Voice-focused experimentation | Useful for voice-led exploration | More voice-centered than all-purpose music creation |

Why ToMusic Takes the First Position

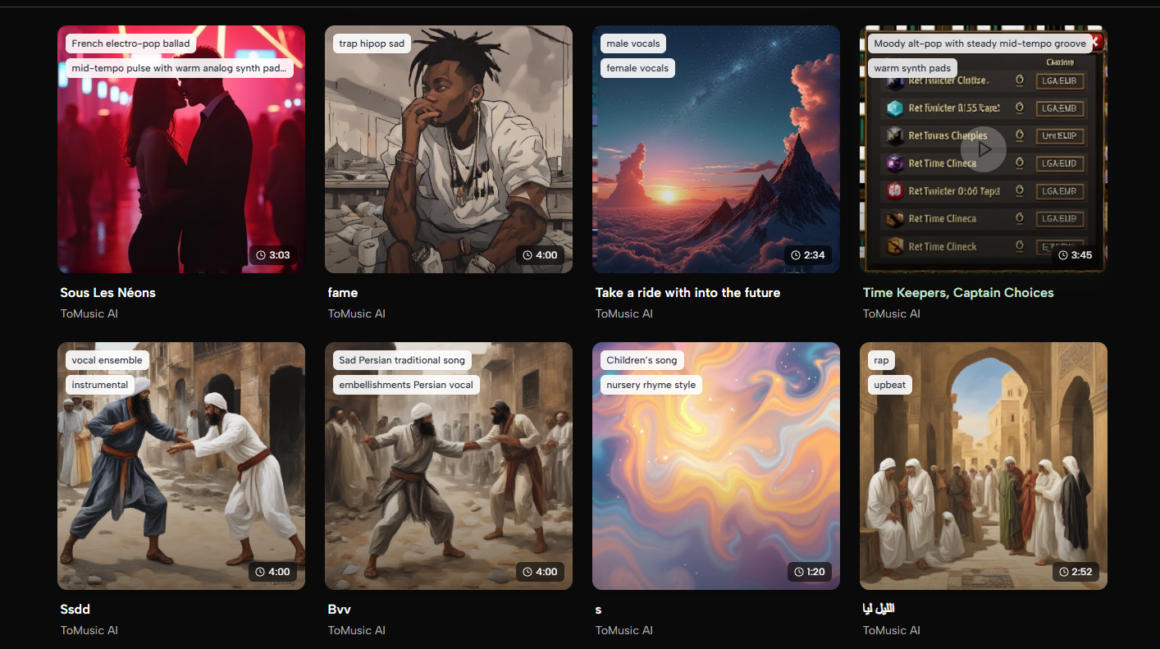

ToMusic ranks first because it combines accessibility with enough structure to keep generation purposeful. The homepage and creation flow make its operating logic easy to understand. Users can switch between simpler prompting and more custom input, choose a model, decide whether they want instrumental output, and then work from either a text description or lyrics. That sequence matters because it mirrors how people actually think about song creation.

It matches real creative starting points

People do not all begin in the same place. Some begin with a mood. Some begin with lyrics. Some only know they want a cinematic piano piece or a dance-pop chorus with a certain emotional tone. ToMusic does not force all of those people into one narrow input pattern.

The workflow is visible before commitment

In many AI products, the logic only becomes clear after several clicks. ToMusic makes the structure legible up front. In my observation, this increases trust because the user can immediately see what kind of system they are dealing with.

Multiple models give it practical flexibility

The presence of multiple models matters because song creation is not one job. Sometimes you want speed. Sometimes you want stronger vocals. Sometimes you want more length or a different balance between musical complexity and immediacy. A platform that acknowledges this feels more usable over time.

It supports both songs and instrumentals

This is easy to overlook, but it matters. Many creators are not trying to publish a vocal single. They are trying to create backing music for a video, a podcast intro, an ad variation, or a concept reel. Instrumental choice broadens the platform’s usefulness.

What the Other Nine Platforms Do Well

A balanced ranking needs to be honest about the alternatives.

Suno feels fast and culturally familiar

Suno is often the easiest recommendation for users who want fast full songs and a very consumer-friendly starting point. It is widely recognized, simple to enter, and good at turning a prompt into something that feels complete. Its tradeoff is that broad accessibility can sometimes come at the cost of fine control.

Udio often appeals to users who care about musical feel

Udio tends to attract people who want a more music-centered creative experience and are willing to spend more time steering the outcome. In my testing, tools like this can reward patience, but they may ask more from the user when the brief is highly specific.

SOUNDRAW is strong for background music work

SOUNDRAW makes sense for creators who need royalty-free tracks and want some control over length, energy, and arrangement. That makes it valuable for video and commercial content workflows, even if it is not the first place I would send someone whose main goal is lyric-led song generation.

Mubert and Beatoven are practical utility tools

These tools are most useful when the job is functional: background scoring, social video support, podcast beds, or quick creator assets. Their value lies in speed and workflow fit rather than emotional songwriting depth.

AIVA, Loudly, Boomy, and Musicfy each fill narrower roles

AIVA remains relevant for users who think more in compositional terms. Loudly is attractive for creator-oriented ecosystems. Boomy stays approachable for complete beginners. Musicfy is especially interesting when voice experimentation is part of the goal. None of them is a weak product, but each is aimed at a narrower job than general text-or-lyrics-to-song creation.

How ToMusic Actually Works in Practice

The official flow is simpler than many people expect, which is part of its appeal.

Step 1. Choose a mode and model

Users begin by selecting a simpler or more custom generation path and choosing the model that fits the task. This sets expectations before writing anything.

Step 2. Enter a description or lyrics

The user can provide a short description, style direction, title, or lyrics depending on the chosen mode. There is also an instrumental option for users who do not want vocals.

Step 3. Generate, review, and iterate

Once the track is generated, the user listens, evaluates fit, and either keeps it or adjusts the inputs. This is where AI music becomes practical: not at the first render, but in the speed of revision.

Step 4. Save or download the useful result

When a version works, it can move into the user’s library or download flow for actual use. That final step matters because a music idea only becomes valuable when it leaves the demo stage.
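
For readers who think in code, the four steps above amount to a generate, review, revise loop. The Python sketch below is an illustration only: `Request`, `Draft`, `iterate`, and the stub functions are hypothetical names invented for this example, and nothing here reflects ToMusic's actual interface or any real API.

```python
from dataclasses import dataclass, replace
from typing import Callable, Optional


@dataclass(frozen=True)
class Request:
    """Step 1 and Step 2: mode, model, and the text input (all hypothetical fields)."""
    mode: str                    # e.g. "simple" or "custom"; labels are assumptions
    model: str                   # placeholder model name, not a real product model
    text: str                    # a description or lyrics
    instrumental: bool = False   # True when no vocals are wanted


@dataclass(frozen=True)
class Draft:
    """One generated result, tied to the request that produced it."""
    request: Request
    audio_ref: str               # stand-in for wherever the audio would live


def iterate(
    request: Request,
    generate: Callable[[Request], Draft],
    accept: Callable[[Draft], bool],
    revise: Callable[[Request, Draft], Request],
    max_rounds: int = 3,
) -> Optional[Draft]:
    """Step 3 and Step 4: generate, review, and revise until a draft is accepted."""
    for _ in range(max_rounds):
        draft = generate(request)
        if accept(draft):
            return draft                      # Step 4: keep the useful result
        request = revise(request, draft)      # Step 3: adjust inputs and rerun
    return None                               # nothing acceptable within the budget


# Stubs standing in for a real generator and a human listener.
def fake_generate(req: Request) -> Draft:
    return Draft(request=req, audio_ref=f"draft-for:{req.text}")


def wants_piano(draft: Draft) -> bool:
    return "piano" in draft.request.text


def add_piano(req: Request, _draft: Draft) -> Request:
    return replace(req, text=req.text + ", solo piano")


start = Request(mode="simple", model="model-a", text="calm cinematic track",
                instrumental=True)
result = iterate(start, fake_generate, wants_piano, add_piano)
```

In this toy run the first draft is rejected, the prompt is revised once, and the second draft is accepted. The point of the sketch is the shape of the loop: each revision reuses the previous request rather than starting from scratch.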

Where These Tools Fit in Real Work

Music AI is most convincing when discussed in context rather than abstraction.

Short-form video production

Creators who publish frequently often do not need a perfect song. They need a fitting song fast. AI platforms help them test tone, pacing, and emotional framing without waiting on a full custom composition cycle.

Lyric prototyping

Writers who have words but not yet a melodic direction can hear different interpretations of the same lyric idea. This shortens the gap between writing and evaluation.

Creative concept testing

Brands, solo creators, and students can use music AI to test whether a piece should feel uplifting, cinematic, intimate, playful, or aggressive before investing deeper production time.

Background scoring for non-musicians

This may be one of the most important use cases. Many users are not aspiring songwriters. They simply need fitting music for media. AI lowers the entry barrier without requiring formal production knowledge.

The Honest Limits of Music AI Rankings

No ranking should pretend these tools remove the need for judgment.

Prompt quality still matters

A vague prompt often leads to a vague song. Better direction usually produces better outcomes, even in highly accessible tools.

One generation is rarely the final answer

In my observation, most strong results emerge after one or two rounds of revision. That does not make the tools weak. It simply means they are generative systems, not mind readers.

Use case mismatch creates unfair disappointment

A platform built for background scoring may look weak if judged like a vocal-song generator. A voice-heavy platform may feel awkward if the user only wants clean instrumental cues. Fit matters more than brand familiarity.

Music quality and usefulness are not identical

Some outputs sound impressive in isolation but still fail the assignment. The real question is whether the track fits the project, not whether it sounds interesting for twenty seconds.

What This Ranking Really Suggests

The most important shift in 2026 is not that AI can make music. It is that music generation has become a usable creative layer inside ordinary workflows. People now move from idea to listenable draft far faster than before, and that changes how they plan content, test concepts, and shape emotional direction.

ToMusic sits at the top of this list because it understands that the first win is not perfection. The first win is clarity. It gives users a visible way to move from description or lyrics to output, supports both song and instrumental paths, and frames iteration as part of the normal process rather than evidence of failure. That makes it useful not only for people chasing novelty, but also for people trying to get real creative work done.